The EUI Robert Schuman Centre for Advanced Studies’ working paper series has two interesting recent additions on the economic analysis of procurement regulation and its effects on competition, efficiency and value for money. Both papers are by BKO Tas.

The first paper, ‘Bunching Below Thresholds to Manipulate Public Procurement’, explores the effects of a contracting authority’s ‘bunching strategy’, whereby it seeks to exercise more discretion by artificially estimating the value of future contracts just below the thresholds that would trigger compliance with EU procurement rules. This paper is relevant to the broader discussion on the usefulness and adequacy of current EU (and WTO GPA) value thresholds (see eg the work of Telles, here and here), as well as to the regulatory decisions that EU Member States face on whether to extend the EU rules to ‘below-threshold’ contracts.

The second paper, ‘Effect of Public Procurement Regulation on Competition and Cost-Effectiveness’, uses the World Bank’s ‘Benchmarking Public Procurement’ quality scores to empirically test the positive effects of improved regulation quality on competition and value for money, measured as increases in the number of bidders and in the probability that the procurement price is lower than the estimated cost. This paper is relevant in the context of recent discussions about the usefulness (or not) of procurement benchmarks, and of the increasing concern about the reduced number of bids in EU-regulated public tenders.

In this blog post, I reflect on the methodology and insights of both papers, paying particular attention to the fact that both build on datasets and/or indexes (TED, the WB benchmark) that I find rather imperfect and unsuitable for this type of analysis (regarding TED, in the context of the Single Market Scoreboard for Public Procurement (SMSPP) that builds upon it, see here; regarding the WB benchmark, see here). Therefore, not all criticisms below are directed at the papers themselves, but rather at the distorting effects that skewed, incomplete or misleading data and indicators can have on more refined analysis that builds upon them.

Bunching Below Thresholds to Manipulate Procurement (Tas: 2019a)

It is well-known that the EU procurement rules are based on a series of jurisdictional triggers and that one of them concerns value thresholds—currently regulated in Arts 4 & 5 of Directive 2014/24/EU. Contracts with an estimated value above those thresholds are subjected to the entire EU procurement regulation, whereas contracts of a lower value are solely subjected to principles-based requirements where they are of ‘cross-border interest’. Given the obvious temptation/interest in keeping procurement shielded from EU requirements, the EU Directives have included an anti-circumvention rule aimed at preventing Member States from artificially splitting contracts in order to keep their award below the relevant jurisdictional thresholds (Art 5(3) Dir 2014/24). This rule has been interpreted expansively by the Court of Justice of the European Union (see eg here).

‘Bunching Below Thresholds to Manipulate Public Procurement’ examines the effects of a practice that would likely infringe the anti-circumvention rule, as it assesses a strategy of ‘bunching estimated costs just below thresholds’ ‘to exercise more discretion in public procurement’. The paper develops a methodology to identify contracting authorities ‘that have higher probabilities of bunching estimated values below EU thresholds’ (ie manipulative authorities) and finds that ‘[m]anipulative authorities have significantly lower probabilities of employing competitive procurement procedure. The bunching manipulation scheme significantly diminishes cost-effectiveness of public procurement. On average, prices of below threshold contracts are 18-28% higher when the authority has an elevated probability of bunching.’ These are quite striking (but perhaps not surprising) results.
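To make the intuition behind 'bunching' more concrete, the following is a minimal sketch of a crude per-authority indicator: the share of an authority's near-threshold notices that fall just below the threshold rather than just above it. This is not the regression discontinuity methodology in Tas (2019a), only a simplified illustration. The function name `bunching_ratio`, the 5% window and the threshold of 100,000 are all assumptions made for the example, and the records are invented.

```python
from collections import defaultdict


def bunching_ratio(records, threshold, window=0.05):
    """Share of each authority's near-threshold notices lying just below it.

    records: iterable of (authority_id, estimated_value) pairs (hypothetical).
    window: relative band around the threshold (assumption: 5% either side).
    """
    lower = threshold * (1 - window)
    upper = threshold * (1 + window)
    below = defaultdict(int)
    above = defaultdict(int)
    for authority, value in records:
        if lower <= value < threshold:
            below[authority] += 1
        elif threshold <= value <= upper:
            above[authority] += 1
    ratios = {}
    for authority in set(below) | set(above):
        total = below[authority] + above[authority]
        ratios[authority] = below[authority] / total
    return ratios


# Invented records; the 250,000 contract is outside the window and ignored.
sample = [
    ("A", 99_000), ("A", 98_500), ("A", 97_000), ("A", 101_000),
    ("B", 96_000), ("B", 103_000), ("B", 250_000),
]
print(bunching_ratio(sample, threshold=100_000))
```

A ratio well above one half would merely flag an authority for closer inspection. It says nothing about intent, and it can only be computed for contracts that appear in the dataset at all.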

The paper employs a regression discontinuity approach to determine the likelihood of bunching. In order to do that, the paper relies on the TED database. The paper is certainly difficult to read and hardly intelligible for a lawyer, but there are some issues that raise important questions. One concerns the author’s (mis)understanding of how the WTO GPA and the EU procurement rules operate, in particular when the paper states that ‘Contracts covered by the WTO GPA are subject to additional scrutiny by international organizations and authorities (sic). Accordingly, contracts covered by the WTO GPA are less likely to be manipulated by EU authorities’ (p. 12). This is simply an uncritical transplant of considerations made by the authors of a paper that examined procurement in the Czech Republic, where a threshold separating EU-covered from non-EU-covered procurement would make sense. Here, the distinction between WTO GPA and EU-covered procurement simply makes no sense, given that WTO GPA and EU thresholds are coordinated. This alone raises some issues concerning the tests designed by the author to check the robustness of the hypothesis that bunching leads to inefficiency in procurement expenditure.

Another issue concerns the way in which the author equates open procedures to a ‘first price auction mechanism’ (which they are not exactly) and dismisses other procedures (notably, the restricted procedure) as incapable of ensuring value for money or, more likely, as representative of a higher degree of discretion for the contracting authority—which is a highly questionable assumption.

More importantly, I am not sure that the author understood what is in the TED database and, crucially, what is not there (see section 2 of Tas (2019a) for methodology and data description). Albeit not very clearly, the author presents TED as a comprehensive database of procurement notices, ie as if 100% of procurement expenditure by Member States were recorded there. However, in the specific context of bunching below thresholds, the TED database is very likely to be incomplete.

Contracting authorities tendering contracts below EU thresholds are under no obligation to publish a contract notice (Art 49 Dir 2014/24). They could publish voluntarily, in particular in the form of a voluntary ex ante transparency (VEAT) notice, but that would make no sense from the perspective of a contracting authority that seeks to avoid compliance with EU rules by bunching (ie manipulating) the estimated contract value, as that would expose it to potential litigation. Most authorities that are bunching their procurement needs (or, in simple terms, avoiding compliance with the EU rules) will not be reflected in the TED database at all, or will not be identified by the methodology used by Tas (2019a), as they will not have filed any notices for contracts below thresholds.

How is it possible that TED includes notices regarding contracts below the EU thresholds, then? Well, this is anybody’s guess, but mine is that a large proportion of those notices will either come from countries with a tradition of full transparency (over-reporting), concern contracts where there are doubts about the potential cross-border interest (sometimes assessed over-cautiously), or be notices with mistakes, where the estimated value of the contract is erroneously indicated as below thresholds.

Even if my guess were incorrect and all notices for contracts with a value below thresholds were accurate and justified by the existence of a potential cross-border interest, the database cannot be considered complete. One of the issues raised (imperfectly) by the Single Market Scoreboard (indicator [3] publication rate) is the relatively low level of procurement that is advertised in TED compared to the (putative/presumptive) total volume of procurement expenditure by the Member States. Without information on the conditions of the vast majority of contract awards (below thresholds, unreported, etc), any analysis of potential losses of competitiveness / efficiency in public expenditure (due to bunching or otherwise) is bound to be misleading.
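The incompleteness concern can be illustrated with a toy example. The sketch below assumes, purely for illustration, that above-threshold notices are always recorded while below-threshold notices are recorded only if the authority chooses to publish voluntarily. The threshold, the authority labels and the contract values are invented, and the helper `below_threshold_counts` is hypothetical. It reflects no real TED data.

```python
from collections import Counter

THRESHOLD = 100_000  # hypothetical value threshold, not an actual EU threshold


def below_threshold_counts(contracts):
    """Return (actual, recorded) counts of below-threshold contracts.

    contracts: iterable of (authority, estimated_value, publishes_voluntarily).
    Assumption: a below-threshold contract reaches the database only when
    the authority publishes voluntarily.
    """
    actual, recorded = Counter(), Counter()
    for authority, value, publishes_voluntarily in contracts:
        if value < THRESHOLD:
            actual[authority] += 1
            if publishes_voluntarily:
                recorded[authority] += 1
    return actual, recorded


# Invented data: one over-reporting authority, one that bunches and stays silent.
contracts = [
    ("transparent", 99_000, True),
    ("transparent", 150_000, True),
    ("buncher", 99_500, False),
    ("buncher", 99_900, False),
    ("buncher", 120_000, False),
]
actual, recorded = below_threshold_counts(contracts)
print("actual:", dict(actual))
print("recorded:", dict(recorded))
```

Under these assumptions, the authority that actually bunches leaves no below-threshold trace in the database, while the over-reporting authority is the only one that appears just below the threshold.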

Moreover, Tas (2019a) is premised on the hypothesis that procurement below EU thresholds allows for significantly more discretion than procurement above those thresholds. However, this hypothesis fails to recognise the variety of transposition strategies at Member State level. While some countries have opted for less stringent below EU threshold regimes, others have extended the EU rules to the entirety of their procurement (or, perhaps, to contracts down to values much lower than the EU thresholds, with the exception of some class of ‘micropurchases’). This would require the introduction of a control that could refine Tas’ analysis and distinguish those cases of bunching that do lead to more discretion from those that do not (at least formally). Such a control could perhaps also distinguish price effects derived from national-only transparency from those of more legally dubious maneuvering.

In my view, regardless of the methodology and the math underpinning the paper (which I am in no position to assess in detail), once these data issues are taken into account, the story the paper tries to tell breaks down. There are important shortcomings in its empirical strategy that raise significant issues around the strength of its findings, assessed not against the information in TED, but against the (largely unknown, unrecorded) reality of procurement in the EU.

I have no doubt that there is bunching in practice, and that the intuition that it raises procurement costs must be right, but I have serious doubts about the possibility of reliably identifying bunching or estimating its effects on the basis of the information in TED, as most culprits will not be included and the effects of below-threshold (national-only) competition will mostly not be accounted for.

(Good) Regulation, Competition & Cost-Effectiveness (Tas: 2019b)

It is also a very intuitive hypothesis that better regulation should lead to better procurement outcomes and, consequently, that more open and robust procurement rules should lead to more efficiency in the expenditure of public funds. As mentioned above, Tas (2019b) explores this hypothesis and seeks to empirically test it using the TED database and the World Bank’s Benchmarking Public Procurement (in its 2017 iteration, see here). I will not repeat my misgivings about the use of the TED database as a reliable source of information. In this second part, I will solely comment on the use of the WB’s benchmark.

The paper relies on four of the WB’s benchmark indicators (one further constructed by Djankov et al (2017)): the ‘bid preparation score, bid and contract management score, payment of suppliers score and PP overall index’. The paper includes a useful table with these values (see Tas (2019b: Table 4)), which allows the author to rank the countries according to the quality of their procurement regulation. The findings of Tas (2019b) are thus entirely dependent on the quality of the WB’s benchmark and its ability to capture (and distinguish) good procurement regulation.

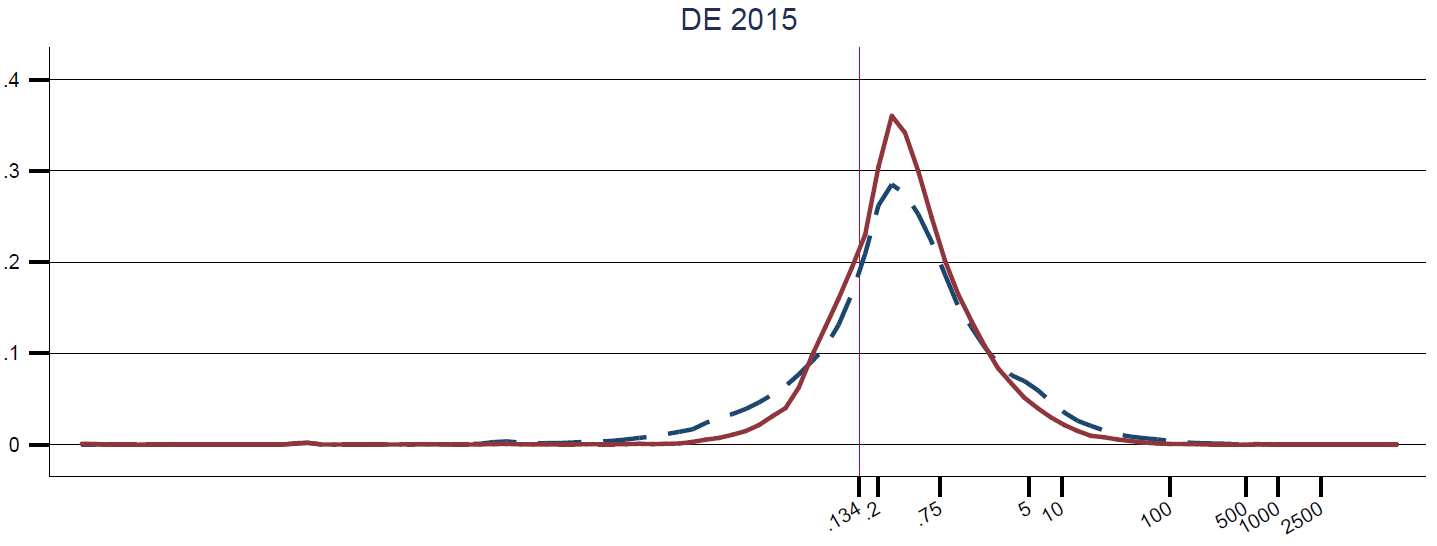

In order to test the extent to which the WB’s benchmark is a good input for this sort of analysis, I have compared it to the indicator that results from the European Commission’s Single Market Scoreboard for Public Procurement (SMSPP, in its 2018 iteration). The comparison is rather striking …